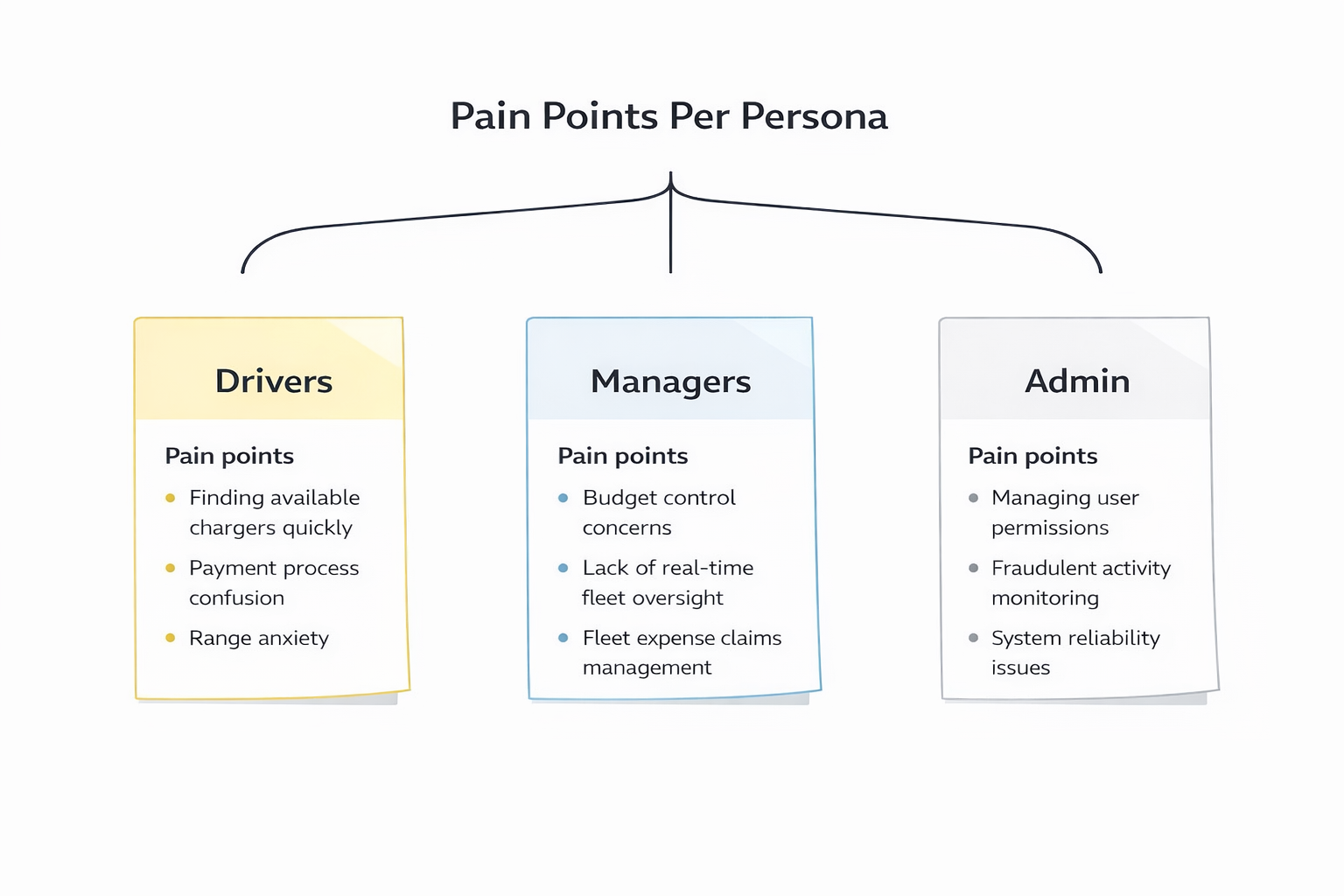

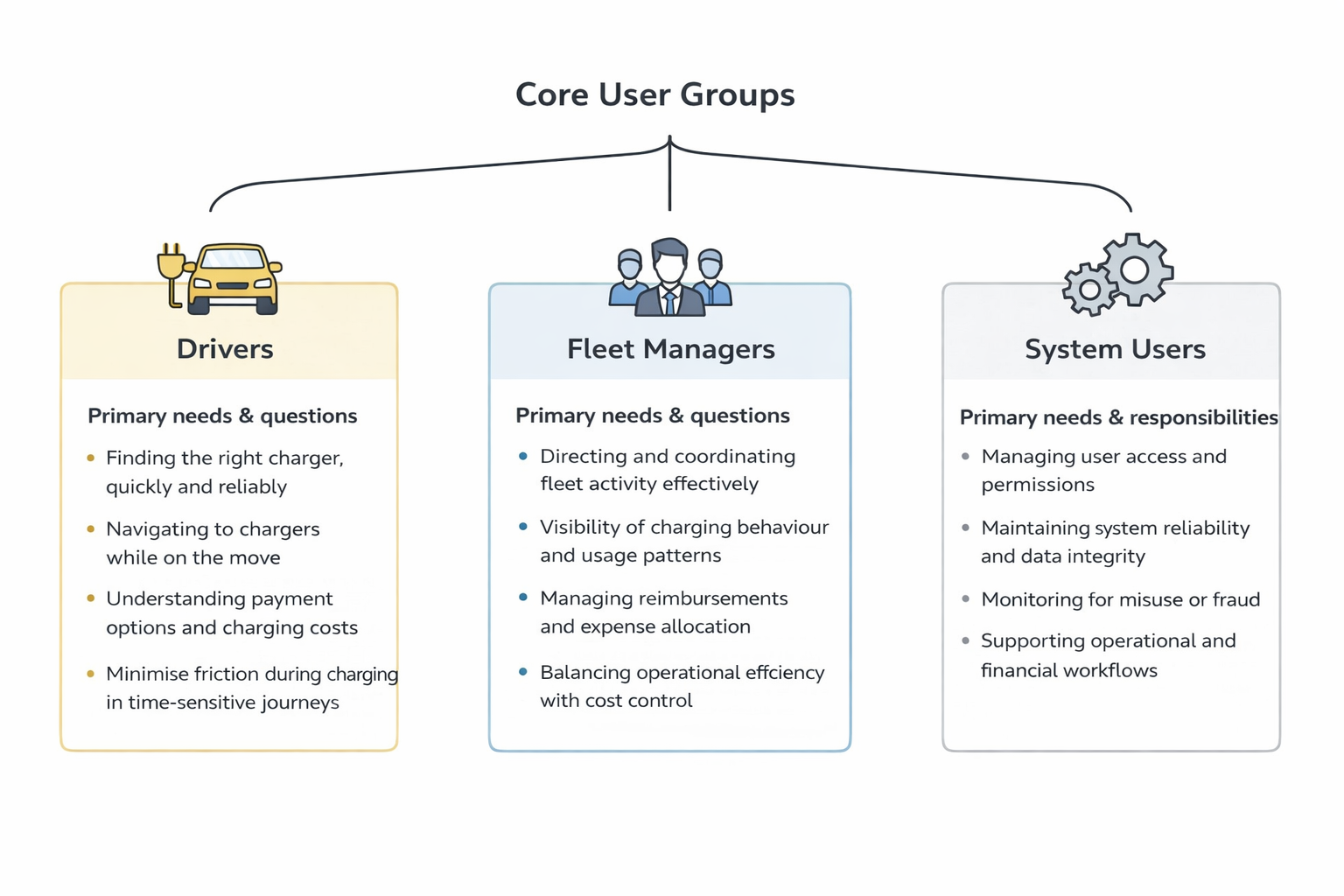

As we expanded into B2B fleet, the product needed to serve a new and distinct set of users: fleet managers, drivers, and administrative stakeholders, each with different goals, authorities, and frustrations. Our prior consumer-facing work gave us limited foundation to build on. The business had knowledge about these users, but it was fragmented across teams and had never been formally structured or validated against real customer input.

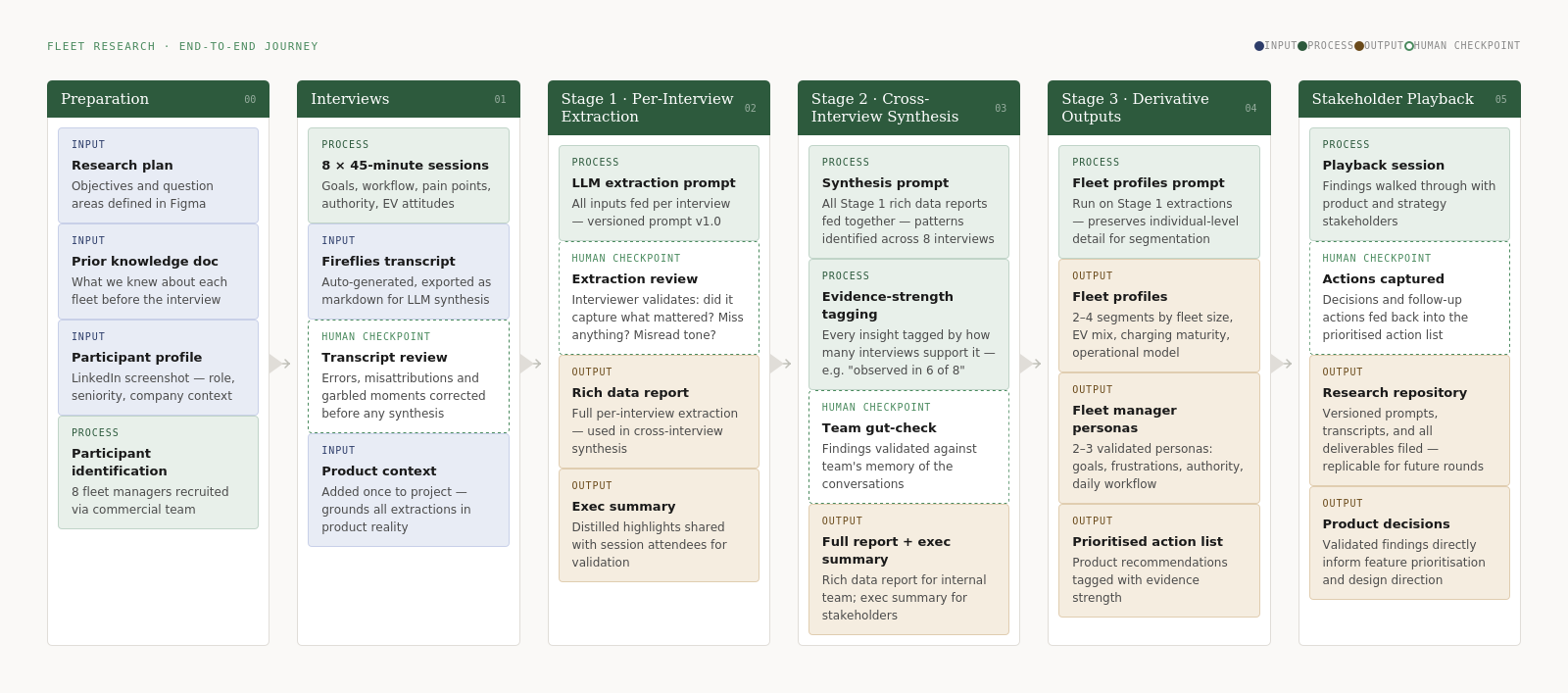

Eight 45-minute interviews with fleet managers gave us the depth to reach thematic saturation, which was enough to validate assumptions and change product decisions with confidence.

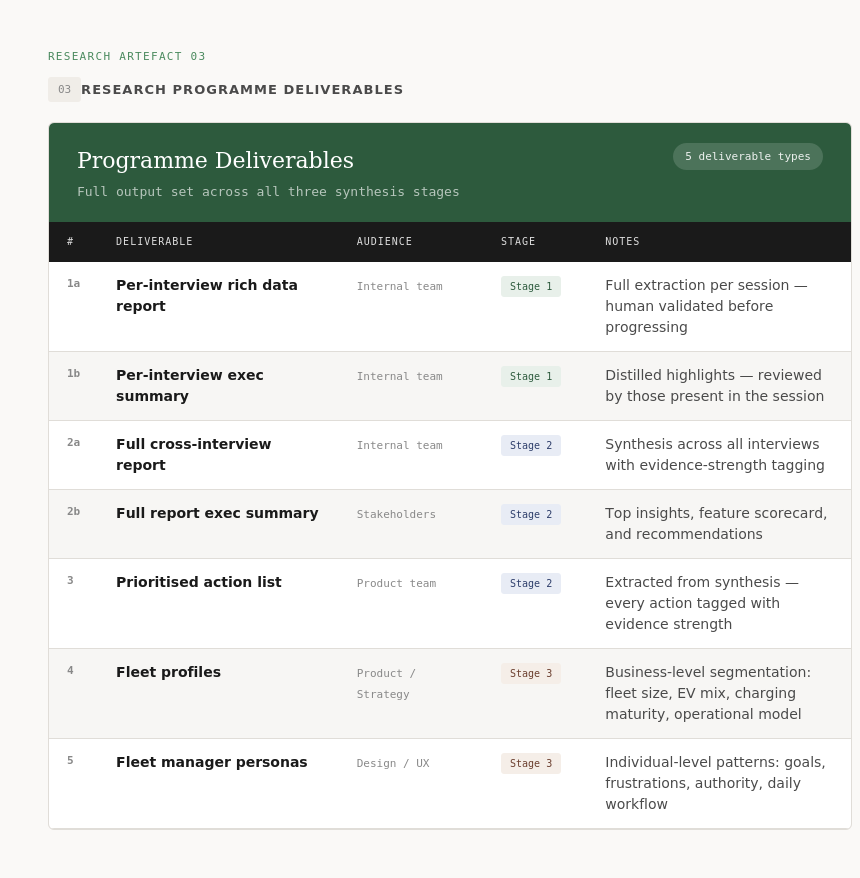

This project set out to close that gap, not just by producing personas, but by building the research infrastructure to validate and evolve them over time.

The research was initiated before any fleet-specific data was available, which meant we couldn't rely on analytics or prior user research as a baseline. The project also ran in parallel with live product delivery, so the research system had to be lightweight enough to run without slowing the team down. Collaboration with the commercial team was essential as they held the closest existing knowledge of fleet customers and were key to framing the right questions.

Defined objectives, question areas, and participant criteria in collaboration with the commercial team.

Identified fleet manager participants with support from commercial, selecting for range across fleet size and operational model.

Structured interviews to surface goals, frustrations, daily workflows, decision-making authority, and attitudes toward EV adoption.

Reviewed and corrected raw transcripts for accuracy before synthesis, ensuring data quality at the point of entry.

Replace siloed commercial instinct with structured, evidence-backed customer understanding accessible to the whole team.

Move beyond impressionistic findings. Every insight was tagged by how many interviews supported it, so the team could distinguish established patterns from emerging signals.

The personas and fleet profiles needed to be reusable and evolvable, built on a versioned, replicable workflow rather than a one-off research sprint.

Qualitative research is only as useful as the rigour behind it. Every insight was tagged by how many interviews supported it, turning "people said" into "5 of 7 participants said." That distinction changed how confidently the team could act on findings.
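As a rough illustration of the tagging idea, the sketch below counts how many distinct interviews support each insight and labels it as an established pattern or an emerging signal. It is not the tooling used on the project: the function name, the placeholder themes and participant IDs, and the 50% threshold are all assumptions made for the example.

```python
from collections import defaultdict


def tag_evidence(extractions, total_interviews, established_share=0.5):
    """Tag each theme with the number of distinct interviews supporting it.

    extractions: iterable of (interview_id, theme) pairs (hypothetical format).
    established_share: assumed cut-off for calling a theme "established".
    """
    supporters = defaultdict(set)
    for interview_id, theme in extractions:
        # A set means repeated mentions in one interview count only once.
        supporters[theme].add(interview_id)

    tagged = []
    for theme, ids in supporters.items():
        count = len(ids)
        share = count / total_interviews
        label = "established pattern" if share >= established_share else "emerging signal"
        tagged.append({
            "theme": theme,
            "count": count,
            "support": f"{count} of {total_interviews}",
            "label": label,
        })

    # Strongest evidence first, then alphabetical for stable ordering.
    return sorted(tagged, key=lambda t: (-t["count"], t["theme"]))


# Placeholder data only; these are not real findings.
sample = [
    ("p1", "theme_a"), ("p2", "theme_a"), ("p3", "theme_a"),
    ("p4", "theme_a"), ("p5", "theme_a"),
    ("p2", "theme_b"), ("p2", "theme_b"),
]

for row in tag_evidence(sample, total_interviews=7):
    print(row["theme"], row["support"], row["label"])
```

Counting distinct interviews rather than total mentions keeps one talkative participant from making a theme look more widespread than it is.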

The LLM handled synthesis at scale, but a human reviewed every extraction before it moved to the next stage. The tool accelerated the work; it didn't replace the critical thinking.

Versioned prompts, structured transcripts, and a filed research repository meant every interview added to a growing asset rather than a one-off report. The system was designed to get stronger over time.

Proto personas were starting hypotheses. Validated personas were the output of evidence. The principle throughout was that the work should evolve with the product, not sit in a deck and go stale.

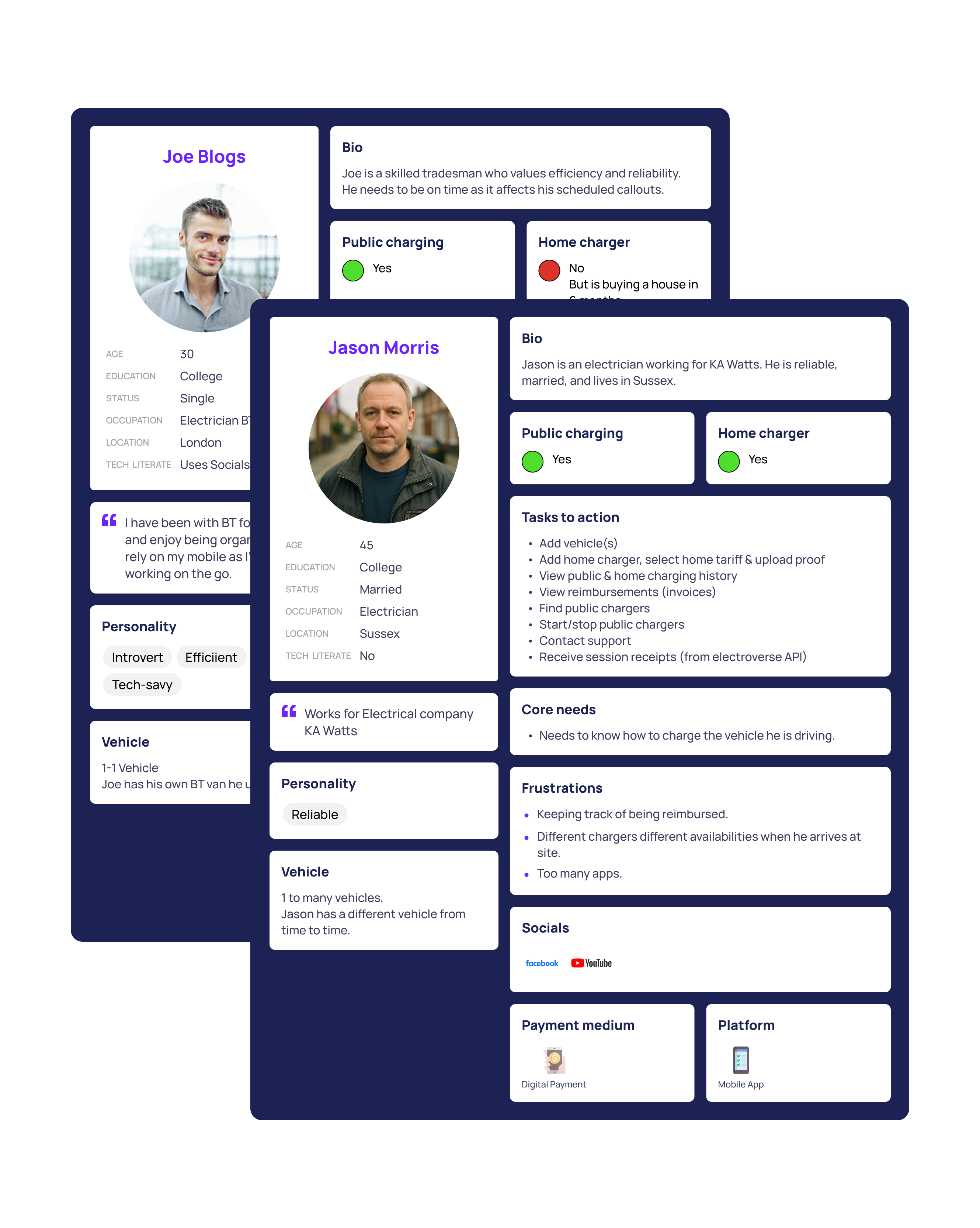

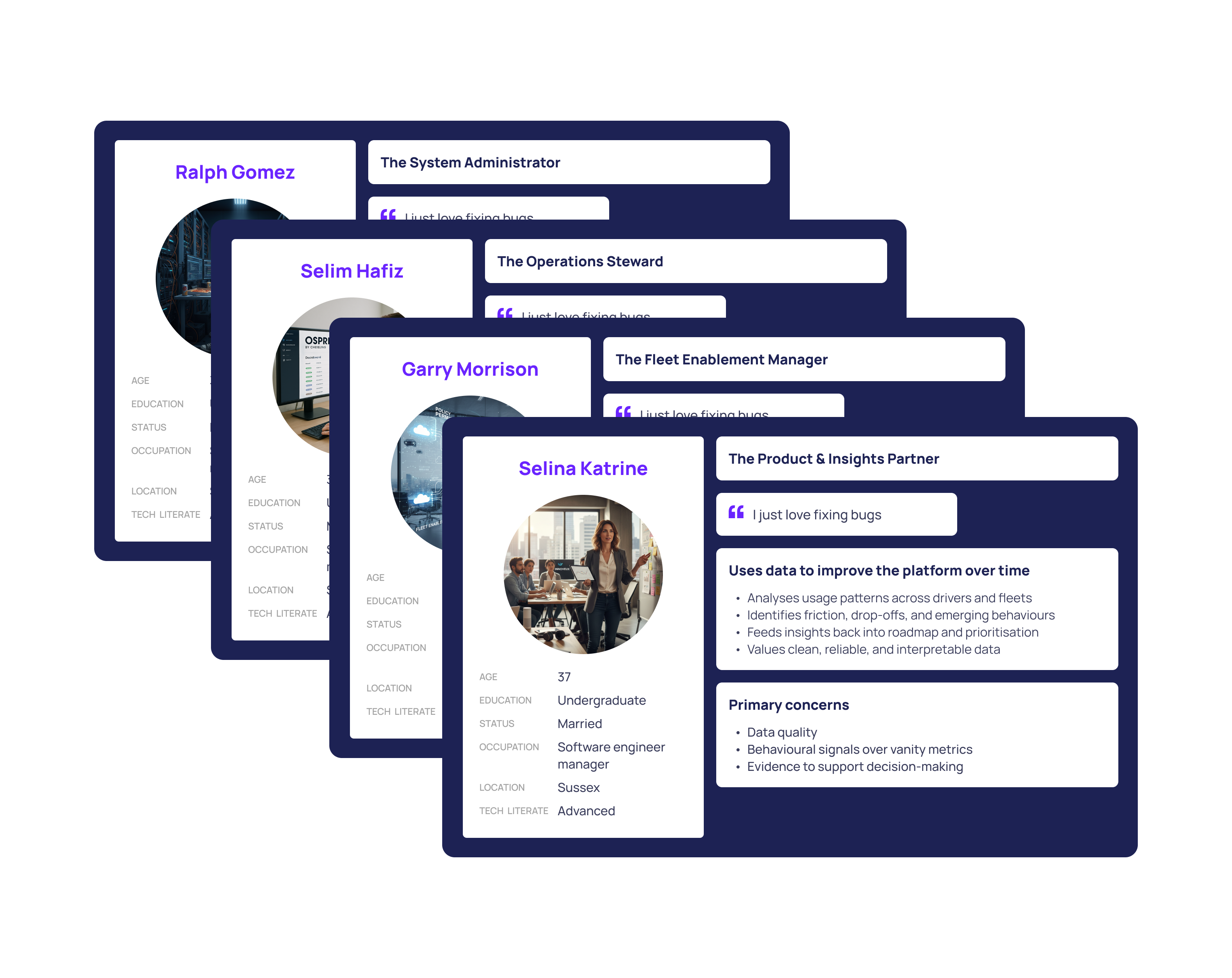

With research learnings in hand, the proto personas developed into detailed archetypes that recognised core differences between user tasks, needs, and frustrations, grounded in real user insights. They actively informed key journeys in the product, such as bulk actions in an actions panel to reduce repeated tasks.

I began with proto personas built on the reasonable assumptions we could make from commercial knowledge, which gave us a starting point. This working foundation let us design for the core audience from an informed stance, ready to be shaped by data.

We reached out to users, conducted email surveys, and ran in-depth interviews to validate the proto personas and shape them into reliable, accurate compasses for the product. These steps gave us insight into users' mental models of a day in their life and ultimately enabled a more user-centric approach.

A replicable, LLM-assisted research system that transformed raw qualitative interviews into structured, evidence-backed fleet intelligence. Outputs included distinct fleet profiles for product and strategy, validated fleet manager personas for design, a prioritised action list tagged by evidence strength, and a versioned research repository so future interviews extend the work rather than restart it. The system was shared with stakeholders via a structured playback session, with findings directly informing product direction.

Encoding research structure upfront, with clear objectives, consistent transcript format, and versioned prompts, made the difference between a synthesis that held up to scrutiny and one that didn't. The LLM was only as good as the inputs and the human judgment applied at every checkpoint. Running validation alongside extraction, rather than at the end, caught errors before they compounded through the analysis. The most valuable shift was treating the system as a persistent research asset, one that gets stronger with each new interview, rather than a project with a fixed endpoint.

For enquiries about product design roles or collaborations, feel free to get in touch.

Some work is subject to confidentiality and can’t be shared publicly, but I’m happy to discuss further examples on request. I aim to respond within one business day.